Helping customers’ success, through data: Rebuilding Asana’s Account Health Score

Three years ago, Asana created a critical metric that changed the way our Customer Success (CS) team did its work. The Account Health Score (AHS) is that metric, and it does exactly what its name suggests; it’s a measure of how well a team on Asana is doing. This value on a 100-point scale is computed by combining a short list of engagement metrics. In theory, one could look at the resulting score each day to see whether a particular account was doing well, and our CS team relied on it as a way to determine which customers were struggling with Asana.

While the AHS was central to many internal workflows, the data science team wanted to make it better. The project of improving it aligned well with our core areas of focus, since a key part of cultivating data wisdom is ensuring that everyone is empowered with the right data and the tools to make use of them. Improving AHS was an opportunity to build a large machine learning-based system, and that excited us. So we met with key stakeholders to discuss the flaws of AHS and developed a new metric: the Account Health Score 2 (AHS2). In this post, we’ll cover the major design decisions involved in creating AHS2 and explain how each of the shortcomings we sought to overcome shaped the finished product.

Responding to declines in health sooner

We want to make sure our customers get the most out of product. The AHS score was a proxy for their success, but in its original iteration it was a lagging indicator. Our CS team needed a metric that told them when a customer was starting to struggle, so that they could step in and help proactively.

To make sure the AHS2 would meet their needs, we discussed their workflow and process of reaching out to users. How did they decide whom to contact? How much advance notice would be enough to turn around a struggling customer? As a result of these discussions, we settled on much of the basic structure for the AHS2.

The AHS had been a relatively simple heuristic, but in order to anticipate our customers’ behavior better, we decided that the AHS2 must be a classifier trained on historical customer churn data. Because we gathered from our conversations that at least one to two months’ notice would be ideal for preventing an account from churning, we would label accounts as “churned” eight weeks out from their historical churn date. Those who were less than four weeks from churning were included in the training set with a churned label, but weren’t used when scoring the model, since they were cases the model might easily predict but that would likely be too close to churning to rescue.

The scoring process itself was also shaped by these early discussions. CS has a finite amount of time each month they can allocate to reaching out to unhealthy accounts, so they start with the highest risk accounts. By taking this real-life limitation into account when scoring our model, we could make sure that it was rewarding accuracy wins that would translate to real-life business wins. For this reason, we chose to evaluate the model using top-decile lift, which is the increased probability of choosing a churner given that we consider only accounts with the lowest 10% of AHS2 scores rather than all accounts. While top-decile lift is a pretty standard churn metric, 10% of all customers also approximately matched the number of accounts our CS team could reach out to in the time window in which low-scoring accounts would churn.

Using a wider variety of data as inputs

When the AHS was created, the data program at Asana was in its infancy. Since then the available data has expanded tremendously. Once limited to visits and records of core actions, we now have data about things like how many employees work at the companies that pay for Asana, whether the account’s users are concentrated within a single geographical region or spread across many, and how reliably payments are made. But the AHS algorithms remained nearly unchanged, continuing to use variations on the same four engagement inputs. For many accounts, a graph of their AHS over time was nearly indistinguishable in shape from a graph of Asana’s monthly visitors over time. Though this gave the impression that the AHS was providing trustworthy information, it made little sense to have two nearly identical graphs taking up prime dashboard real estate.

With the AHS2, we set out to change that. While we didn’t want to add complexity for its own sake, we wanted to make sure that we were taking full advantage of the possible inputs at our disposal. We started researching how others were approaching this problem and created a shortlist of approaches. In selecting from that list, the biggest contributor to our decision was a desire to take full advantage of the data at our disposal. This led to our decision to choose a variant on a random forest model, which is robust for large numbers of inputs compared to many other classifiers. It also motivated us to create additional datasets that we had discussed in the past, but we never made time to build. Under the banner of the AHS2, we were able to carve out time to code up processors for things like daily statistics about user sessions and users championing Asana to their coworkers, and start them running with our nightly pipeline. Many of these features actually came as a direct result of our partnership with the CS team. They regularly provided us with qualitative indicators of account health that they’d learned from their time interacting directly with customers, and we would look for a way to quantify them given the available data.

Seeing how much of a difference these new inputs could make on the performance of the model, we also worked to create a flexible system of model configurations that could easily train a new model with additional inputs. Our hope is that this will allow us to regularly incorporate new data into the AHS2 as it becomes available, preventing us from reaching a point where the high-level measure of account health is years behind the rest of our data.

Making the final score have real world meaning

Over time, teams who regularly used the AHS grew to understand how scores map to real world outcomes. Outside of those teams, however, it was only really known that high scores were better than low scores. We wanted to create a replacement that would allow more people to use this tool without a lot of time spent learning how to interpret it.This would both help its adoption on a broader range of teams and speed up on-boarding for the teams that were already AHS consumers. We also didn’t want to break the existing workflows of people who were used to the AHS being a number that could be tracked over time and where high scores indicated good health. With these constraints in mind, reporting on the AHS2 as a probability of retention seemed like a natural choice. This both has an intuitive real world meaning and is mathematically quite useful, in that it can be used in any of the numerous calculations that ask for a probability, including computing things like the expected value of revenue lost to churn.

We initially considered using a logistic regression or some other classifier that would output a probability by default. However, after considering the previous goal of employing a wider range of data, it was clear that a logistic regression wouldn’t be able to handle the number of inputs we were interested in including.

This left us with a few other options. One possibility would have been to provide a single prediction—will a team churn or not—but this didn’t align with the goal of empowering teams to keep their existing workflow of tracking the numerical score over time. We also could have reported the fraction of trees predicting churn, which was at least a number that could be followed over time, but it was even farther from being grounded in a real world meaning than the AHS had been.

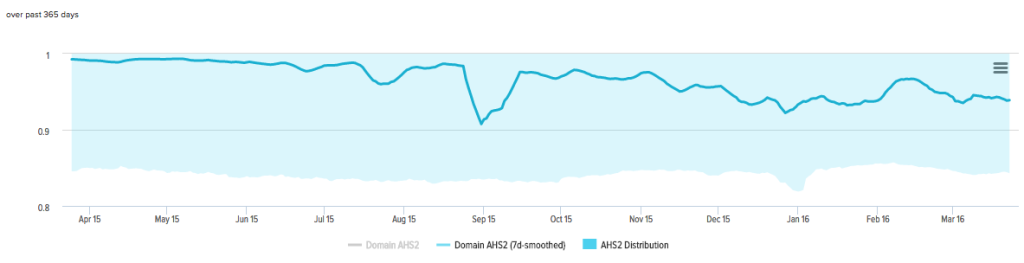

We chose to map the number of trees predicting churn to an actual probability. To do this, we fit a probability distribution on top of our AHS2 scores, using the fraction of accounts that churned per AHS2 score as our sample probabilities. We report on this number in any place where we were replacing the AHS; it’s this probability, and not the raw output of the model, that we refer to outside of the data team as the AHS2.

Providing context on why the score was low or high

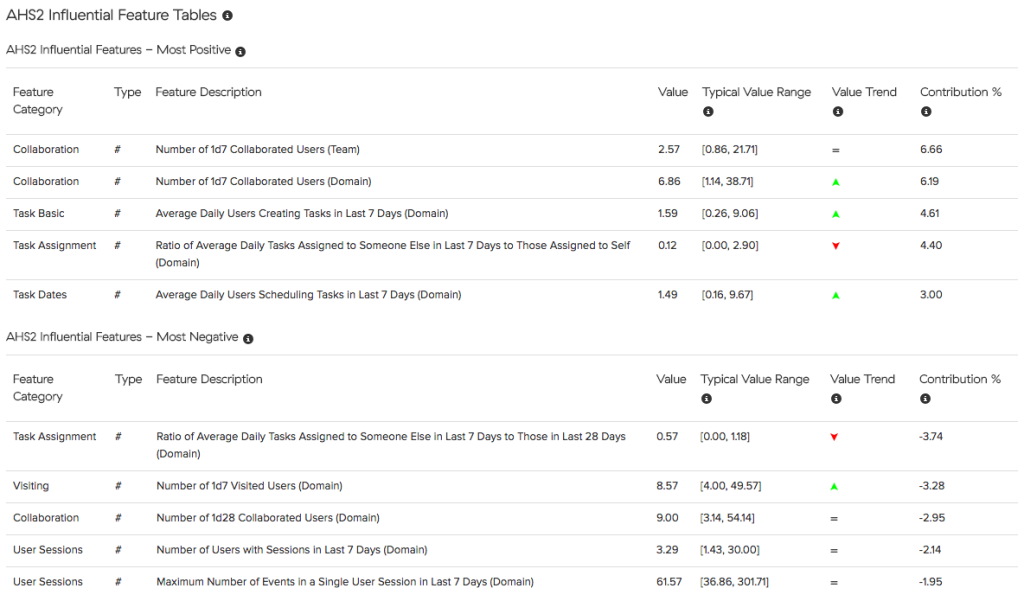

The final request we received was more information about why an account was doing poorly, since teams often felt at a loss when reaching out to “unhealthy” customers. We immediately thought of doing something related to feature importances, which can be used to understand which features are most contributing to the separation of different classes in a random forest. However, feature importances, as they are typically calculated, provide information about which features are most important overall, and we wanted information on a per-customer basis.

The solution we chose used the entropy changes that are typically used for computing feature importances, but instead of tracking them across entire trees, we tracked them through the path on each tree taken by a single account. Features that had high entropy change values and were overwhelmingly found on trees predicting non-churn were said to be the most positive influential features, while those with high entropy change values that predicted churn were said to be the most negative influential features.

These were presented in a table on the customer dashboards with some information about the features, including the current value of the feature, a typical value range for accounts of a similar size, and whether it had gone up or down over the past week. The math doesn’t always work out perfectly in trying to convert a complex, path-dependent system into a single importance number per feature. For instance, there is a lot of noise for features with only very low entropy change values. Even with that caveat, it does an excellent job of suggesting a handful of strong and weak areas for each account.

Conclusions

The development of AHS2 is the first machine learning project of this scale taken on by Asana. It could easily have gone the way of many well-intentioned initial forays into predictive modeling by become a large and expensive data toy that was overfit to the training data, or one that was highly accurate at predicting something no one needed. Because of goals and constraints that were established early on, and strengthened through regular meetings with stakeholders, we avoided that negative outcome.

We were able to build a tool that was fun to create, and importantly, genuinely useful for its end users: the AHS2 is 20–50% more likely to catch at-risk accounts than the AHS was (depending on the kind of account).

We eventually hope to expand the ways that AHS2 is used. We’re building new alerts around the AHS2, equipping CS with what they need to successfully reach out to unhealthy accounts. We’re expanding to supplement this with more automated outreach that can provide actionable insights to customers directly.

From there, we hope to start thinking about health and retention beyond Premium accounts. We could use it to do things like generate sales leads or help with revenue projections. Most importantly, any improvements will allow us to do a better job of serving our customers by highlighting places where individual customers can get more value out of Asana as well as common pain points that many customers share.